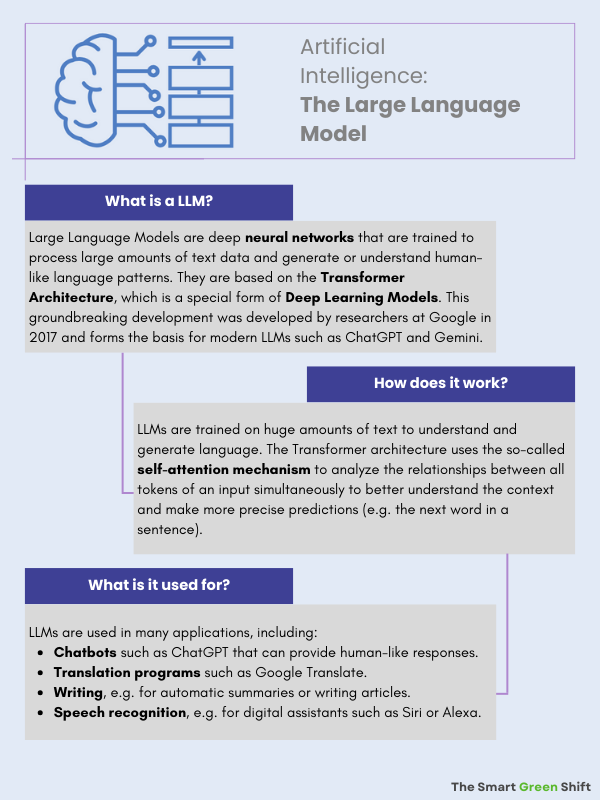

Artificial intelligence (AI) has developed rapidly in recent years and is now present in almost all areas of life. Voice assistants, personalized product recommendations, autonomous vehicles, and medical diagnostics are just some of the diverse fields of application in which AI enables enormous progress and groundbreaking developments. Its great potential is also praised in terms of sustainability: optimized processes enable resources to be used more efficiently, agricultural yields to be increased, and energy consumption in intelligent power grids to be adjusted. So-called LLMs (Large Language Models), such as ChatGPT or Google’s Gemini, open up completely new possibilities for research, business, and society. But while technological innovations are being celebrated, their ecological impact is also increasingly coming into focus.

Artificial intelligence initially sounds like an abstract, intangible concept, but it is based on a very real, physical infrastructure that brings with it progress and opportunities as well as challenges. Large transformer models in particular are at the center of the discussion about sustainability and resource consumption. While AI has existed in various forms for a long time, powerful LLMs have only recently gained importance. Since the transformer architecture was introduced in 2017 and models built on it, such as BERT, GPT, and T5, followed in 2018 and 2019, the resource consumption of these technologies has also become the focus of scientific research. Studies such as Energy and Policy Considerations for Deep Learning in NLP (Strubell et al., 2019) and more recent work by Google and OpenAI have provided the first reliable figures on energy requirements and CO₂ footprints. Nevertheless, many aspects remain unclear, especially when it comes to the entire life cycle of the systems, from hardware production through training and application to maintenance.

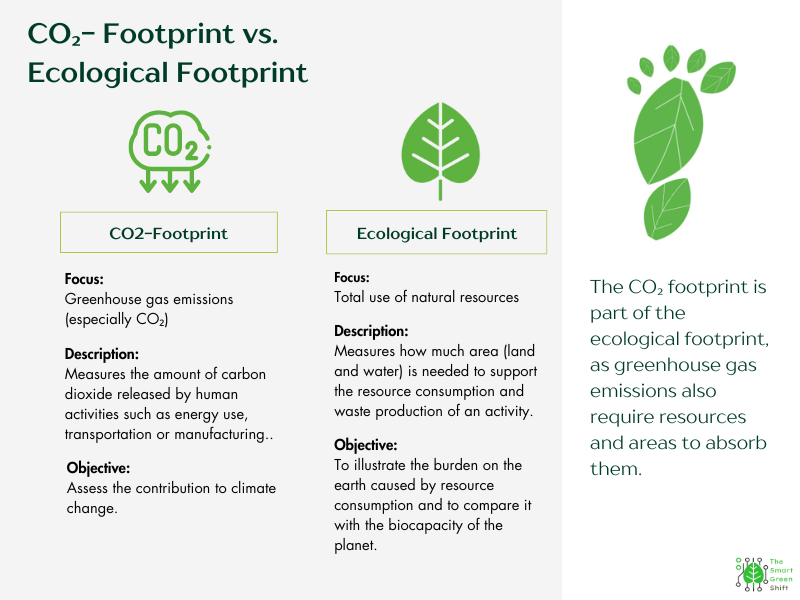

Although many figures and calculations are not yet fully reliable due to a lack of transparency and valid data, the scale of resource consumption is already becoming clear. The aim of this article is to provide an approximate overview of the ecological footprint of modern AI models.

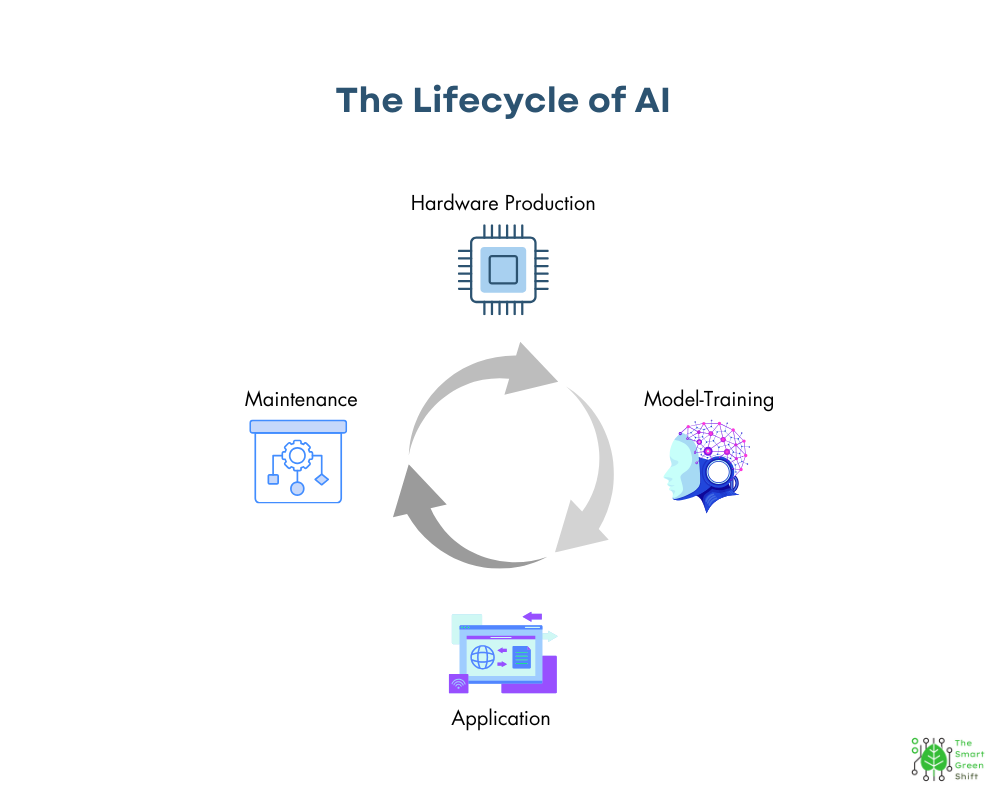

Resource Consumption in the Life Cycle of Artificial Intelligence

The resource consumption of AI can be divided into four central phases. The first phase concerns the hardware production required for the physical infrastructure, which consumes large amounts of energy and raw materials—especially in the manufacture of specialized chips and the construction of data centers. In the second phase, training, gigantic amounts of data are processed, which causes high electricity and water consumption. The third phase involves the application of AI, i.e., the interaction with users, whereby every request to a model such as ChatGPT continues to require energy and water. Finally, the maintenance of these systems also requires continuous computing power and infrastructure, which means that resource consumption remains permanently high.

Hardware Production: High Raw Material Expenditure for the Production of Complex Systems

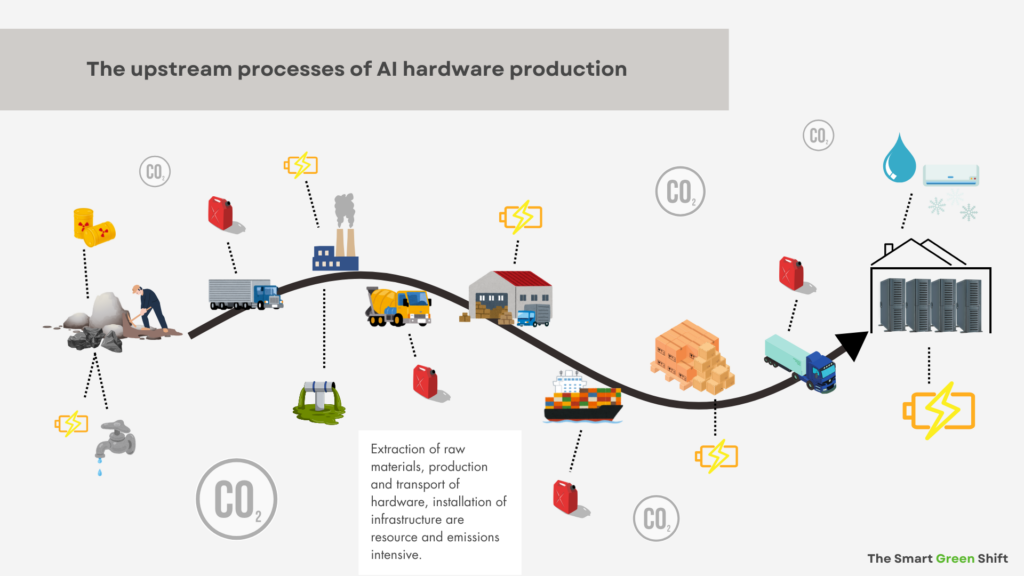

The physical infrastructure of modern AI applications is hardware that is specially developed for computationally intensive tasks. The production of AI accelerators and other specialized high-performance processors is an energy-intensive and resource-consuming process. Semiconductor manufacturing requires highly pure materials and complex chemical processes. It requires not only metals such as copper and tantalum as well as rare earth elements, but also large amounts of energy and water to mine and process these materials. Raw materials are often mined in regions that lack strict environmental and social standards. The resulting contaminated soil and poisoned groundwater harm ecosystems, and local populations suffer health consequences.

The components are then manufactured in clean rooms under strictly controlled conditions, which further increases energy requirements. In addition, semiconductor production processes often involve large quantities of pollutants, such as the chemicals used for etching and thin-film deposition.

A data center consists not only of servers, but also of complex air conditioning and power supply systems, whose production is resource-intensive as well. The entire production cycle also depends on a long, often globally organized supply chain that includes the transport and storage of raw materials and finished products, which further increases the CO₂ footprint.

A large part of the resource consumption of AI is therefore due to the upstream processes such as manufacturing, transport, and installation of the necessary infrastructure. All of these factors contribute to the fact that hardware production for AI applications is one of the most ecologically damaging phases in the life cycle.

The subsequent phases of training and application usually take place in different data centers or on different hardware, because the two processes place different demands on the infrastructure. This further increases resource consumption, since additional hardware has to be produced and operated.

Training of the Models

Once the physical infrastructure has been manufactured and is ready for use, the training phase begins. Training large language models is particularly energy-intensive: in order to learn the complex relationships of human language, neural networks with billions of parameters are trained over days, weeks, or even months on specialized computing clusters. The data centers used for such training runs often operate around the clock and require enormous amounts of electricity, not only to run the hardware but also to cool it, and cooling additionally consumes large amounts of water. Cooling is necessary to dissipate the heat generated by the intensive computing and to keep operations stable, which further increases the ecological footprint.
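
The cooling overhead described above is commonly expressed through two data center metrics: power usage effectiveness (PUE), the ratio of total facility energy to the energy consumed by the IT equipment itself, and water usage effectiveness (WUE), the liters of water used on site per kilowatt-hour of IT energy. The following minimal sketch shows how the two turn an IT energy figure into total electricity and cooling water. The PUE and WUE values are hypothetical placeholders chosen for illustration, not figures for any real facility or training run.

```python
from dataclasses import dataclass


@dataclass
class Facility:
    pue: float  # total facility energy / IT equipment energy (dimensionless, >= 1)
    wue: float  # on-site water use per kWh of IT energy (liters/kWh)


def facility_footprint(it_energy_kwh: float, facility: Facility) -> dict:
    """Split a workload's footprint into IT energy, overhead energy, and on-site water."""
    total_kwh = it_energy_kwh * facility.pue
    return {
        "it_kwh": it_energy_kwh,
        "overhead_kwh": total_kwh - it_energy_kwh,  # cooling, power conversion, lighting
        "total_kwh": total_kwh,
        "water_liters": it_energy_kwh * facility.wue,
    }


if __name__ == "__main__":
    # Hypothetical values, for illustration only.
    site = Facility(pue=1.2, wue=1.8)
    print(facility_footprint(1_000_000, site))
```

With these assumed values, one million kWh of IT energy becomes 1.2 million kWh at the facility level and 1.8 million liters of cooling water. Note that WUE covers only water used on site; water consumed at the power plants that generate the electricity is not included.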

An analysis by The Washington Post in collaboration with researchers at the University of California, Riverside, examined resource consumption during AI training and estimated that training OpenAI’s GPT-3 required 700,000 liters of water for cooling in Microsoft’s data centers. Meta’s language model Llama-3 consumed far more water, with 22 million liters reportedly being used for training.

A key factor in the high energy consumption is the number of calculations that must be carried out during training. Every weight in a neural network is adjusted over many iterations and for every piece of training data, so the number of computing operations grows with both the model size and the amount of training data. It is the combination of high computing intensity and long training times that leads to such high electricity and water consumption when training AI models.
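
A widely used rule of thumb from the scaling-law literature estimates the training compute of a dense transformer as roughly 6 floating-point operations per parameter per training token (C ≈ 6·N·D). The sketch below applies it; the GPT-3 values (175 billion parameters, about 300 billion training tokens) are those reported in the GPT-3 paper, and the approximation ignores attention overhead as well as failed or repeated runs. It makes the scaling explicit: compute grows in proportion to model size and to data volume, so doubling either one doubles the number of operations.

```python
def training_flops(num_params: float, num_tokens: float) -> float:
    """Approximate training compute of a dense transformer: ~6 FLOPs per parameter per token.

    Roughly 2 FLOPs per parameter per token for the forward pass and 4 for the backward pass.
    """
    return 6.0 * num_params * num_tokens


if __name__ == "__main__":
    gpt3 = training_flops(175e9, 300e9)
    print(f"GPT-3 (approx.): {gpt3:.2e} FLOPs")
    # Doubling the parameter count doubles the estimate: growth is linear, not exponential.
    print(training_flops(350e9, 300e9) / gpt3)
```

The estimate comes out at about 3.15 × 10²³ operations for GPT-3. How much electricity this translates into depends on the hardware's efficiency and utilization, which is why published energy figures for the same model can differ.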

Application: AI in Use

Once the AI model has been successfully trained, it is ready for use. This phase is called the inference phase: the model responds to inputs and generates results, which requires constant calculations in real time. In terms of resources, inference requires electricity to run the servers as well as additional electricity and water to cool them.

At first glance, inference seems to be the part of the AI life cycle where resource consumption is lowest, but this impression is deceptive. Although the energy consumption per individual request to a language model is low, it is the growing flood of requests that causes energy and water consumption to skyrocket. The following examples illustrate this: the US Electric Power Research Institute (EPRI) has calculated that a single request to ChatGPT consumes 0.0029 kilowatt-hours (kWh) of electricity. This is roughly ten times the energy requirement of a conventional Google search, which is around 0.0003 kWh. Viewed in isolation, a figure of 0.0029 kWh sounds harmless, and it is. The picture changes when it is scaled up: based on information from OpenAI and EPRI's consumption figures, the trading website BestBrokers assumed around 1 billion requests to ChatGPT per day, which results in an electricity consumption of 2.9 million kWh per day.

Over the course of a year, assuming the number of users and requests remains constant, that would be over 1 billion kWh, or about 1 TWh (terawatt-hour). For comparison: according to the US Energy Information Administration (EIA), an average US household consumes around 29 kWh per day. ChatGPT therefore consumes as much electricity per day as about 100,000 average households. In reality, however, consumption is likely to be considerably higher given the annual growth in active users. OpenAI recently announced that ChatGPT has reached 300 million weekly active users, twice as many as in September 2023. Continually added features such as audio, video, and image generation are also likely to increase usage considerably.
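
The extrapolation above is simple multiplication. This sketch reproduces it with the figures cited in the text (EPRI's per-request estimates, BestBrokers' one billion daily requests, the EIA household average) and, like the original calculation, assumes a constant request volume.

```python
KWH_PER_CHATGPT_QUERY = 0.0029   # EPRI estimate cited above
KWH_PER_GOOGLE_SEARCH = 0.0003   # EPRI estimate cited above
QUERIES_PER_DAY = 1_000_000_000  # BestBrokers assumption cited above
US_HOUSEHOLD_KWH_PER_DAY = 29    # EIA average cited above


def scaled_energy(kwh_per_query: float, queries_per_day: float, days: int = 1) -> float:
    """Total electricity in kWh for a constant query volume over a number of days."""
    return kwh_per_query * queries_per_day * days


if __name__ == "__main__":
    daily = scaled_energy(KWH_PER_CHATGPT_QUERY, QUERIES_PER_DAY)
    yearly = scaled_energy(KWH_PER_CHATGPT_QUERY, QUERIES_PER_DAY, days=365)
    print(f"per query vs. search: {KWH_PER_CHATGPT_QUERY / KWH_PER_GOOGLE_SEARCH:.1f}x")
    print(f"per day:  {daily:,.0f} kWh")
    print(f"per year: {yearly / 1e9:.2f} TWh")  # 1 TWh = 1e9 kWh
    print(f"household equivalents: {daily / US_HOUSEHOLD_KWH_PER_DAY:,.0f}")
```

This yields about 2.9 million kWh per day, roughly 1.06 TWh per year, and about 100,000 household equivalents. The per-request ratio comes out at about 9.7, which is where the "roughly ten times" comparison comes from.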

The aforementioned analysis by The Washington Post and the University of California, Riverside, also examined the energy and water consumption of AI systems during inference. It found that generating a simple 100-word email with ChatGPT consumes 0.14 kWh of electricity and about half a liter of water to cool the infrastructure. For one such email per week, this adds up to 7.5 kWh and 27 liters of water per year. Here, too, the numbers only become striking when extrapolated: if 10% of US workers had ChatGPT write one 100-word email per week for a year, the energy requirement would correspond to the total electricity consumption of all households in Washington, D.C., over 20 days, and the water consumption of 435 million liters would equal the water consumed by all households in the state of Rhode Island over a day and a half, according to The Washington Post.
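
The weekly-email extrapolation can be sketched the same way, using the per-email figures cited above. Because the sketch uses the rounded values (0.14 kWh, half a liter), it lands slightly below the article's 7.5 kWh, 27 liters, and 435 million liters. The worker count of 16 million is an assumption for illustration, corresponding to roughly 10% of a US workforce of about 160 million.

```python
KWH_PER_EMAIL = 0.14     # Washington Post / UC Riverside figure cited above
LITERS_PER_EMAIL = 0.5   # "half a liter", as cited above
EMAILS_PER_YEAR = 52     # one 100-word email per week


def annual_email_footprint(users: float = 1) -> tuple[float, float]:
    """Electricity (kWh) and cooling water (liters) for weekly AI-written emails over one year."""
    return (
        KWH_PER_EMAIL * EMAILS_PER_YEAR * users,
        LITERS_PER_EMAIL * EMAILS_PER_YEAR * users,
    )


if __name__ == "__main__":
    kwh, liters = annual_email_footprint()
    print(f"one person: {kwh:.2f} kWh, {liters:.0f} liters per year")
    # Hypothetical: about 16 million workers, i.e. roughly 10% of the US workforce.
    kwh, liters = annual_email_footprint(users=16e6)
    print(f"16 million people: {kwh / 1e6:.0f} GWh, {liters / 1e6:.0f} million liters per year")
```

Under these assumptions, one person accounts for about 7.3 kWh and 26 liters per year, and 16 million people for roughly 116 GWh and 416 million liters.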

Even these conservatively calculated figures convey a sense of the scale of resource consumption during inference.

Since 2020, the electricity consumption of data centers has increased continuously, and a key driver of this development is the increasing use of AI. Goldman Sachs Research estimates that data center electricity demand will grow by 160% by 2030. Data centers, which run all IT workloads including AI, currently account for 1-2% of global electricity consumption, but this share is likely to rise to 3-4% by the end of the decade as AI development and use expand. By 2028, AI could account for about 19% of data center electricity demand. In the US, for example, data centers accounted for 3% of total electricity consumption in 2022; with the rise of AI, this figure could reach 8% by 2030, almost tripling. Goldman Sachs Research puts AI's share of data center electricity consumption at about 200 terawatt-hours per year between 2023 and 2030. As a result of these developments, carbon dioxide emissions from data centers could more than double between 2022 and 2030.
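
Percentage increases are easy to misread: an increase of 160% means demand ends at 2.6 times its starting level, not 1.6 times. This sketch converts the cited figures into multipliers and an implied average annual growth rate. The baseline year is an assumption, since the forecast as quoted does not state it; 2023 is used here.

```python
def growth_factor(percent_increase: float) -> float:
    """An increase of p percent multiplies the starting value by (1 + p/100)."""
    return 1 + percent_increase / 100


def implied_cagr(factor: float, years: int) -> float:
    """Constant annual growth rate that compounds to `factor` over `years` years."""
    return factor ** (1 / years) - 1


if __name__ == "__main__":
    factor = growth_factor(160)  # Goldman Sachs estimate cited above
    # Assumption: 2023 as the baseline year, i.e. seven years of growth until 2030.
    print(f"demand multiplier: {factor:.1f}x")
    print(f"implied annual growth: {implied_cagr(factor, 2030 - 2023):.1%}")
    # US share of electricity: 3% (2022) -> 8% (2030), as cited above.
    print(f"US share multiplier: {8 / 3:.2f}x")
```

Under the 2023 baseline assumption, a 2.6-fold increase corresponds to an average growth of about 14.6% per year, and the US share rising from 3% to 8% is a factor of about 2.67, hence "almost tripling."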

Maintenance of the Systems

After an AI system has been put into operation, the phase of maintenance and continuous optimization begins. This includes regularly updating software and hardware to close security gaps, integrate new functions, optimize performance, and replace worn-out hardware. Maintenance is an often underestimated but essential aspect of the life cycle of AI systems that also has a significant impact on the ecological footprint.

Continuous maintenance also includes monitoring the systems to prevent failures and ensure constant operational readiness. This is often done via additional computing resources operated in the data centers. Even if the energy consumption of individual maintenance operations may be relatively low, it adds up to considerable consumption over the lifetime of an AI system. In addition, replacing outdated hardware leads to additional resource expenditure. New generations of processors and memory components are needed to meet the increasing demands, which in turn leads to new production cycles that further increase the ecological footprint.
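
A common way to account for the production footprint of hardware is to amortize a device's embodied emissions over its service life. The sketch shows why shorter replacement cycles matter: halving the lifetime doubles the embodied emissions attributed to each year of operation. The embodied-emissions value is a hypothetical placeholder, not a measured figure for any real server or accelerator, and straight-line amortization is only one of several possible allocation rules.

```python
def annual_embodied_emissions(embodied_kg_co2e: float, lifetime_years: float) -> float:
    """Straight-line amortization of manufacturing emissions over the service life."""
    if lifetime_years <= 0:
        raise ValueError("lifetime_years must be positive")
    return embodied_kg_co2e / lifetime_years


if __name__ == "__main__":
    EMBODIED = 1_500.0  # hypothetical kg CO2e to manufacture one server, illustration only
    for years in (6, 4, 3):
        print(f"{years}-year replacement cycle: "
              f"{annual_embodied_emissions(EMBODIED, years):.0f} kg CO2e per year")
```

With the placeholder value, moving from a six-year to a three-year replacement cycle raises the annual share from 250 to 500 kg CO₂e.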

Another important point in the maintenance phase is the updating of the underlying algorithms. Advances in research often lead to new, more efficient methods, but these usually require a new round of training and integration into existing systems before their efficiency gains take effect. The complexity of modern AI systems makes it almost inevitable that regular maintenance work places additional burdens on the energy and resource balance.

Challenges in Calculating the Ecological Footprint

The ecological footprint and the CO₂ balance of the entire life cycle of AI models, especially transformer models, cannot currently be calculated precisely. This is mainly due to incomplete information on the actual resource consumption. While there are now isolated figures on energy consumption for certain phases, such as inference, resource-intensive hardware production in particular remains largely opaque.

In addition, several factors make accurate measurement difficult: First, the energy consumption of AI training processes is often not traceable because data centers use different energy sources and companies rarely disclose detailed consumption data. Second, energy requirements vary considerably depending on hardware, model size, and training duration. Third, emissions arise not only from direct electricity consumption, but also from the manufacture and disposal of hardware, for which there is no reporting obligation on sustainability in many parts of the world. In addition, there are no standardized methods to consistently assess the actual footprint.
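
The first factor, the energy source, can be made concrete with the accounting approach used in studies such as Strubell et al. and Patterson et al.: operational emissions are the IT energy, scaled up by the data center's power usage effectiveness (PUE, total facility energy divided by IT energy), multiplied by the carbon intensity of the electricity supply. The sketch shows how strongly the energy source alone changes the result. All PUE and carbon-intensity values are hypothetical, and embodied emissions from manufacturing and disposal are deliberately left out, because they are precisely the part that is rarely reported.

```python
def operational_emissions_kg(it_energy_kwh: float, pue: float,
                             grid_kg_co2e_per_kwh: float) -> float:
    """Operational emissions: IT energy x PUE x carbon intensity of the electricity used."""
    return it_energy_kwh * pue * grid_kg_co2e_per_kwh


if __name__ == "__main__":
    IT_ENERGY_KWH = 1_000_000  # hypothetical workload
    PUE = 1.2                  # hypothetical facility
    # Hypothetical grid carbon intensities in kg CO2e per kWh.
    grids = {"mostly renewable": 0.05, "mixed": 0.4, "mostly coal": 0.9}
    for name, intensity in grids.items():
        tonnes = operational_emissions_kg(IT_ENERGY_KWH, PUE, intensity) / 1000
        print(f"{name:>16}: {tonnes:,.0f} t CO2e")
```

The identical workload ranges from 60 to 1,080 tonnes of CO₂e depending only on the assumed electricity mix, which illustrates why undisclosed energy sources make reported footprints hard to compare.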

As part of the European Green Deal, the European Union has recently been holding data center operators more accountable by requiring them to report on energy and resource consumption and the resulting emissions. The EU Energy Efficiency Directive (EU) 2023/1791 requires owners and operators of data centers with an installed IT power demand of at least 500 kW to publish various sustainability-related information. These reporting obligations were further specified by the Delegated Regulation C/2024/1639 of March 14, 2024. Operators must now report certain data to a database provided by the European Commission. In addition, the Corporate Sustainability Reporting Directive (CSRD), whose reporting requirements have applied to the first companies since January 1, 2024, requires companies to disclose information on climate-related risks and social impacts. This directive is also part of the European Green Deal and aims to increase the transparency and sustainability of companies. Finally, the EU AI Act, which entered into force in August 2024 and establishes uniform rules for the development, deployment, and use of AI within the EU, partially addresses sustainability aspects. While it is not explicitly a sustainability law, it does hold AI providers and developers accountable for sustainability considerations in some areas, including the need to take energy efficiency into account, with the goal of reducing the ecological footprint of AI applications.

Outlook

Considering the enormous resource and especially energy consumption of artificial intelligence, one can certainly feel uneasy, especially in view of how rapidly its integration and application are growing. This unease is heightened by the veritable “arms race” in AI currently under way between nations, with the major economic powers in particular outdoing each other with investments in this technology. Immediately after his inauguration, US President Trump announced the “Stargate” project with an investment volume of up to half a trillion dollars, whereupon French President Macron followed suit and announced AI investments of 109 billion euros. It does not take in-depth expertise to recognize that these developments will significantly increase environmental pollution, not to mention the ethical risks associated with AI.

However, the immense benefits that AI offers in numerous areas and the progress it enables are also undeniable. This is precisely why it is all the more urgent to closely examine its ecological impact, continuously monitor it, and develop sustainable solutions.

One promising approach was introduced recently: DeepSeek, a Chinese competitor to OpenAI’s ChatGPT. Its reasoning model DeepSeek-R1 is causing a stir for several reasons. On the one hand, its development was reportedly significantly cheaper than that of ChatGPT. On the other hand, some of DeepSeek’s models are released openly, for example on GitHub, which allows the community to adapt and further develop them for their own purposes. What is particularly noteworthy, however, is DeepSeek’s efficiency in terms of sustainability. R1 is based on a Mixture of Experts (MoE) architecture, which takes an innovative approach to reducing computational effort: instead of activating all parameters for every token, the model uses only a small subset of specialized expert networks per token. This significantly reduces the computational load. In addition, R1 optimizes the attention calculation, the mechanism AI models use to identify relevant information in a text, with Multi-head Latent Attention (MLA), which compresses the stored keys and values and thus reduces memory consumption during inference and increases GPU efficiency. Figuratively speaking, the model does not always use its entire “brain,” but only activates the areas that are relevant for a specific task, comparable to a team of experts in which only those specialists whose expertise is currently needed work on a project. Through this targeted activation, economical calculations, and GPU optimization, R1 is designed to be powerful without causing energy consumption on the scale attributed to other models such as ChatGPT.
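
The routing idea behind a Mixture of Experts layer can be sketched in a few lines: a small router scores every expert for the current token, only the top-k experts are evaluated, and their outputs are combined with softmax weights. This is a generic toy illustration with random weights and tiny dimensions, not DeepSeek's implementation; the number of experts, k, and the dimension are arbitrary choices. For scale, DeepSeek-V3, the base model of R1, is reported to activate about 37 billion of its 671 billion parameters per token.

```python
import math
import random


def softmax(xs):
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def matvec(matrix, vec):
    return [sum(w * x for w, x in zip(row, vec)) for row in matrix]


def random_matrix(rows, cols, rng):
    return [[rng.gauss(0.0, 1.0) for _ in range(cols)] for _ in range(rows)]


def moe_forward(token, router, experts, k=2):
    """Route one token to its top-k experts; the remaining experts are never computed."""
    logits = matvec(router, token)                      # one score per expert
    top = sorted(range(len(experts)), key=lambda i: logits[i], reverse=True)[:k]
    gates = softmax([logits[i] for i in top])           # renormalize over the chosen experts
    out = [0.0] * len(token)
    for gate, i in zip(gates, top):
        expert_out = matvec(experts[i], token)          # only k expert evaluations happen
        out = [o + gate * y for o, y in zip(out, expert_out)]
    return out, top


if __name__ == "__main__":
    rng = random.Random(0)
    dim, num_experts = 8, 16
    router = random_matrix(num_experts, dim, rng)
    experts = [random_matrix(dim, dim, rng) for _ in range(num_experts)]
    token = [rng.gauss(0.0, 1.0) for _ in range(dim)]
    output, used = moe_forward(token, router, experts, k=2)
    print(f"experts used: {sorted(used)} of {num_experts}")
    print(f"fraction of expert weights touched: {len(used) / num_experts:.1%}")
```

Here only 2 of 16 experts, or 12.5% of the expert weights, are used for the token. The saving is in computation per token: all experts still have to be held in memory, because a different token may be routed to any of them.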

All in all, this is a promising approach that can be built upon, both technologically and in terms of a more sustainable AI future.

Sources

The Washington Post: A bottle of water per email: the hidden environmental costs of using AI chatbots. From: https://www.washingtonpost.com/technology/2024/09/18/energy-ai-use-electricity-water-data-centers/ Retrieved: February 2, 2025

BestBrokers: AI’s Power Demand: Calculating ChatGPT’s electricity consumption for handling over 365 billion user queries every year. From: https://www.bestbrokers.com/forex-brokers/ais-power-demand-calculating-chatgpts-electricity-consumption-for-handling-over-78-billion-user-queries-every-year/ Retrieved: February 2, 2025

Strubell et al.: Energy and Policy Considerations for Deep Learning in NLP. From: https://aclanthology.org/P19-1355/ Retrieved: February 2, 2025

Li et al.: Making AI Less “Thirsty”: Uncovering and Addressing the Secret Water Footprint of AI Models. From: https://arxiv.org/pdf/2304.03271 Retrieved: February 2, 2025

Goldman Sachs Research: AI is poised to drive 160% increase in data center power demand. From: https://www.goldmansachs.com/insights/articles/AI-poised-to-drive-160-increase-in-power-demand Retrieved: February 2, 2025

Patterson et al.: The Carbon Footprint of Machine Learning Training Will Plateau, Then Shrink. From: https://www.semanticscholar.org/reader/76cb108e37d9d2a06f5a49df04e993f5fb123c26 Retrieved: February 2, 2025

IÖW: KI verbraucht immer mehr Ressourcen: jetzt Nachhaltigkeit messen [AI is consuming more and more resources: measure sustainability now]. From: https://www.ioew.de/news/article/ki-verbraucht-immer-mehr-ressourcen-jetzt-nachhaltigkeit-messen Retrieved: February 2, 2025

EU Artificial Intelligence Act. From: https://artificialintelligenceact.eu/de/ Retrieved: February 2, 2025